Syntheva presents EPIA NPUs

11 November 2025

Full article is here.

We're excited to share EPIA, an open, 64-bit NPU architecture designed for real on-device AI. Before you dismiss it as "yet another edge AI NPU", hold on: EPIA supports massive models, from 7B parameters at the very bottom up to 160B. The architecture itself supports much bigger, multi-trillion-parameter models, but current hardware implementations might not yet be up to that scale.
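To put those parameter counts in perspective, here is a rough back-of-the-envelope sketch of how much storage the weights alone would need at a few bit widths. The bit widths (4, 8, 16) and the helper name `weight_footprint_gb` are illustrative assumptions, not EPIA specifications, and the estimate ignores activations, KV cache, and quantization metadata.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Hypothetical helper for illustration only: counts raw weight bits and
    nothing else (no activations, KV cache, or per-block scale factors).
    """
    total_bits = params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


if __name__ == "__main__":
    # Model sizes taken from the announcement; bit widths are assumptions.
    for params in (7, 160):
        for bits in (4, 8, 16):
            size = weight_footprint_gb(params, bits)
            print(f"{params}B parameters @ {bits}-bit: {size:.1f} GB")
```

Under these assumptions, the larger models would need tens of gigabytes for weights alone, which is the scale of problem that paging models from external storage is meant to address.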

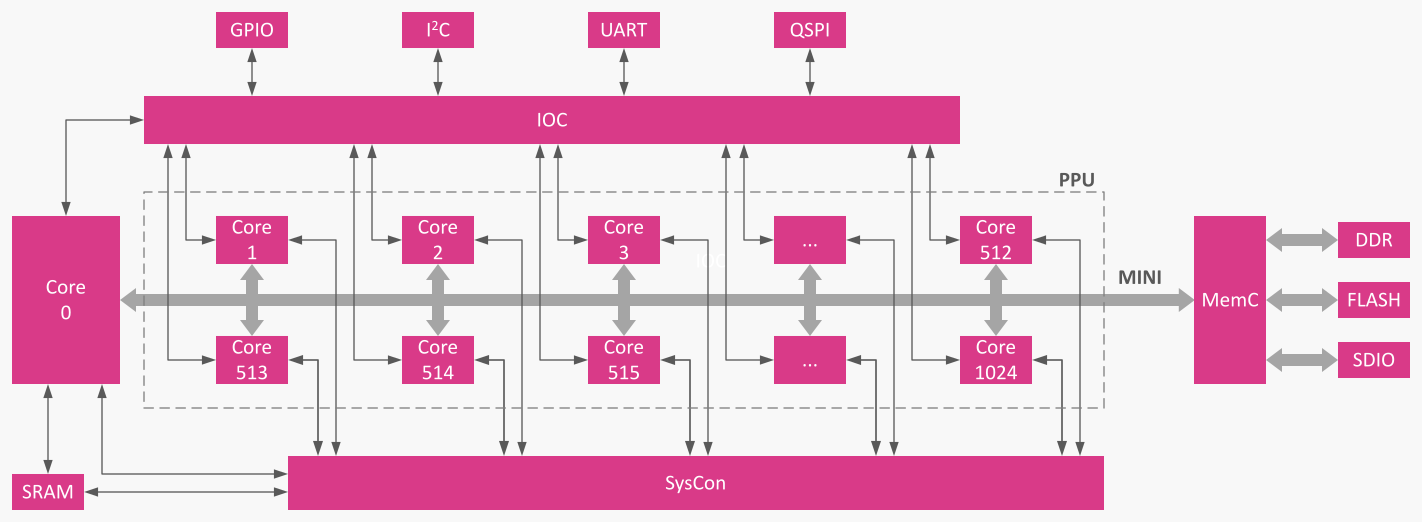

EPIA is lean, predictable, and made for the moment: robotics finally needs private, offline intelligence with deterministic latency and no vendor lock-in. We built an orthogonal ISA, a Scaled Dot-Product Unit for low-bit inference, and a simple PPU code flow fan-out model so parallel work is obvious and efficient. The MINI bus, MemC, and IOC make it easy to stream tensors, page models from flash/SD, and control robotic body modules without massive driver stacks.
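As a rough illustration of what low-bit scaled dot-product computation involves, here is a minimal sketch in plain Python. It assumes the unit's name refers to the usual scaled dot product from attention, (q · k) / sqrt(d), and it uses symmetric per-vector integer quantization as a stand-in technique. The function names and the quantization scheme are hypothetical and are not taken from the EPIA specification.

```python
import math


def quantize(vec, bits=8):
    """Symmetric per-vector quantization to signed integers (illustrative)."""
    qmax = 2 ** (bits - 1) - 1
    peak = max(abs(x) for x in vec)
    scale = peak / qmax if peak > 0 else 1.0
    return [round(x / scale) for x in vec], scale


def scaled_dot_product(q, k, bits=8):
    """Approximate (q . k) / sqrt(d) using an integer multiply-accumulate."""
    if len(q) != len(k):
        raise ValueError("q and k must have the same length")
    q_int, q_scale = quantize(q, bits)
    k_int, k_scale = quantize(k, bits)
    # The multiply-accumulate runs on integers; floating point is only
    # needed once at the end, to undo both quantization scales.
    acc = sum(a * b for a, b in zip(q_int, k_int))
    return acc * q_scale * k_scale / math.sqrt(len(q))


def reference(q, k):
    """Full-precision result, for comparison with the quantized path."""
    return sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q))
```

Comparing `scaled_dot_product(q, k)` against `reference(q, k)` on a few vectors shows the accuracy cost of the chosen bit width; lowering `bits` makes the integer arithmetic cheaper and increases the approximation error.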

Why this matters: teams building humanoids and other robots need autonomy, auditability, and an ecosystem they can extend — not heavy closed GPUs and cloud dependencies. By open-sourcing EPIA we want a RISC-V–style community: toolchains, compilers, cores, and real hardware contributions from everyone.

Typical deployment? One or more EPIA chips on the robot, running vision (VLM), speech (ASR/TTS), reasoning (LLM + action models), and control pipelines. Everything stays local and scales from a single chip to many, with predictable latency and full data privacy.

If you care about on-device autonomy or want to help shape an open NPU stack, let's collaborate!